The year competitive intelligence grew up

Competitive intelligence is no longer the back-office function it once was. In 2026, the discipline sits at the center of how modern organizations make pricing decisions, prioritize product roadmaps, defend deals, and brief their boards.

After three years of generative-AI adoption and a step-change in alternative-data availability, the gap between CI leaders and laggards has widened dramatically — and the cost of remaining in the latter group is now strategic, not merely operational.

This report synthesizes findings from a year-long study of CI practices across more than 600 organizations spanning technology, life sciences, financial services, industrial manufacturing, and consumer goods. It draws on practitioner interviews, vendor briefings, public filings, and primary survey data collected between July 2025 and March 2026. Our goal is not only to describe where the field is today, but to offer a candid view of where it is heading and what separates the programs delivering outsized impact from those that have stalled.

Five findings define the year

- AI is now table stakes. Roughly four in five CI programs report at least one production AI workflow, most commonly automated competitor monitoring, earnings-call summarization, or deal-desk battlecard generation. The conversation has moved from “should we use AI” to “how do we govern it.”

- Real-time has displaced quarterly. The annual SWOT deck is dying. Leading programs operate continuous signal pipelines that push curated competitor moves into Slack, Salesforce, and Microsoft Teams within minutes of detection.

- Alternative data has gone mainstream. Job postings, app-store telemetry, hiring signals, web traffic estimates, patent filings, and shipping records are now standard ingredients in competitor models, even at mid-market firms.

- Centralization is rebounding. After a decade of embedding analysts into product and sales teams, the pendulum is swinging back. Centralized CI functions reporting to a chief strategy officer, CFO, or CMO grew by an estimated 22 percent year over year.

- The trust problem is the next frontier. As AI-generated competitor profiles proliferate, executives are demanding provenance, citations, and confidence scoring. Programs that cannot show their work are losing credibility, and budget.

The organizations winning in 2026 are not necessarily those with the largest CI teams or the biggest tooling budgets. They are those that have re-conceived CI as a decision-support discipline first and a research function second — and have built the analyst skills, governance, and infrastructure to match.

Competitive intelligence has become a leading indicator of strategic agility. Treat it accordingly: fund it, govern it, and integrate it into the cadence of executive decision-making rather than the cadence of quarterly business reviews.

A discipline at an inflection point

Competitive intelligence has existed in some form for as long as commerce itself. What has changed — and changed dramatically in the past 36 months — is the volume of signal available to analysts, the speed at which decisions must be made, and the expectations leaders place on the function.

The competitive landscape has become at once more transparent and more deceptive: more transparent because public digital footprints are richer than ever, more deceptive because narrative warfare, astroturfed reviews, and synthetic content are now part of the standard competitive toolkit.

Against that backdrop, the 2026 CI practitioner is being asked to do more, faster, with smaller margins for error. Where a senior analyst once delivered four landscape assessments a year, that same analyst is now expected to maintain living competitor profiles, respond to ad-hoc executive questions within hours, support sales on competitive deals in real time, and contribute to scenario planning exercises that look five to ten years out.

Why this report, why now

Three converging shifts justify a fresh look at the discipline. First, generative AI has crossed from novelty to dependency in CI workflows — but its integration is uneven, and the variance in outcomes between thoughtful and reckless deployment is enormous. Second, the data ecosystem has expanded faster than most organizations’ governance frameworks, raising fresh ethical and legal questions about where intelligence ends and surveillance begins. Third, the geopolitical environment has made certain categories of competitor research — particularly across the U.S.–China, EU–U.S., and India–Gulf corridors — both more important and more fraught.

Our intent is to give CI leaders, the executives they serve, and the vendors who supply them a shared vocabulary and a shared map of where the discipline stands in 2026.

Methodology

Findings in this report are based on three streams of evidence collected between July 2025 and March 2026:

- Practitioner survey. 612 CI professionals across 41 countries completed a 64-question survey covering team structure, tooling, workflows, ethical practices, and self-reported impact. Respondents skew toward larger organizations (median company revenue: $1.4B) but include a meaningful long tail of mid-market firms.

- In-depth interviews. 78 semi-structured interviews with heads of CI, chief strategy officers, product leaders, and CI software vendors. Interviews ranged from 45 to 90 minutes and were conducted under the Chatham House Rule.

- Document and vendor analysis. Review of more than 200 publicly available CI program artifacts (job postings, RFPs, conference presentations, analyst-day decks) and structured briefings with 23 platform vendors.

All quantitative figures in this report are rounded for readability. Where statistics from third-party sources are cited, they are clearly attributed; all unattributed figures derive from our own primary research.

The 2026 competitive intelligence landscape

By any reasonable measure, the discipline is having its strongest decade. Budgets are up, headcount is up, and the share of CI programs reporting directly to a member of the executive committee has more than doubled since 2020.

Market size and momentum

The market for dedicated competitive and market intelligence software is now estimated to exceed $3.8 billion globally, growing at a compound annual rate in the high teens. That figure understates the true economic footprint of the discipline, which also encompasses adjacent spend on data subscriptions, primary research panels, internal headcount, and the increasingly significant cost of AI inference. Including these adjacent categories, organizations collectively spent an estimated $14–17 billion on competitive intelligence activities in 2025.

Growth is not evenly distributed. Three vendor categories — AI-native CI platforms, alternative-data marketplaces, and embedded sales-intelligence tools — accounted for nearly two-thirds of new spend. Legacy enterprise research platforms, by contrast, are growing in the low single digits and are increasingly displaced or absorbed into broader strategy suites.

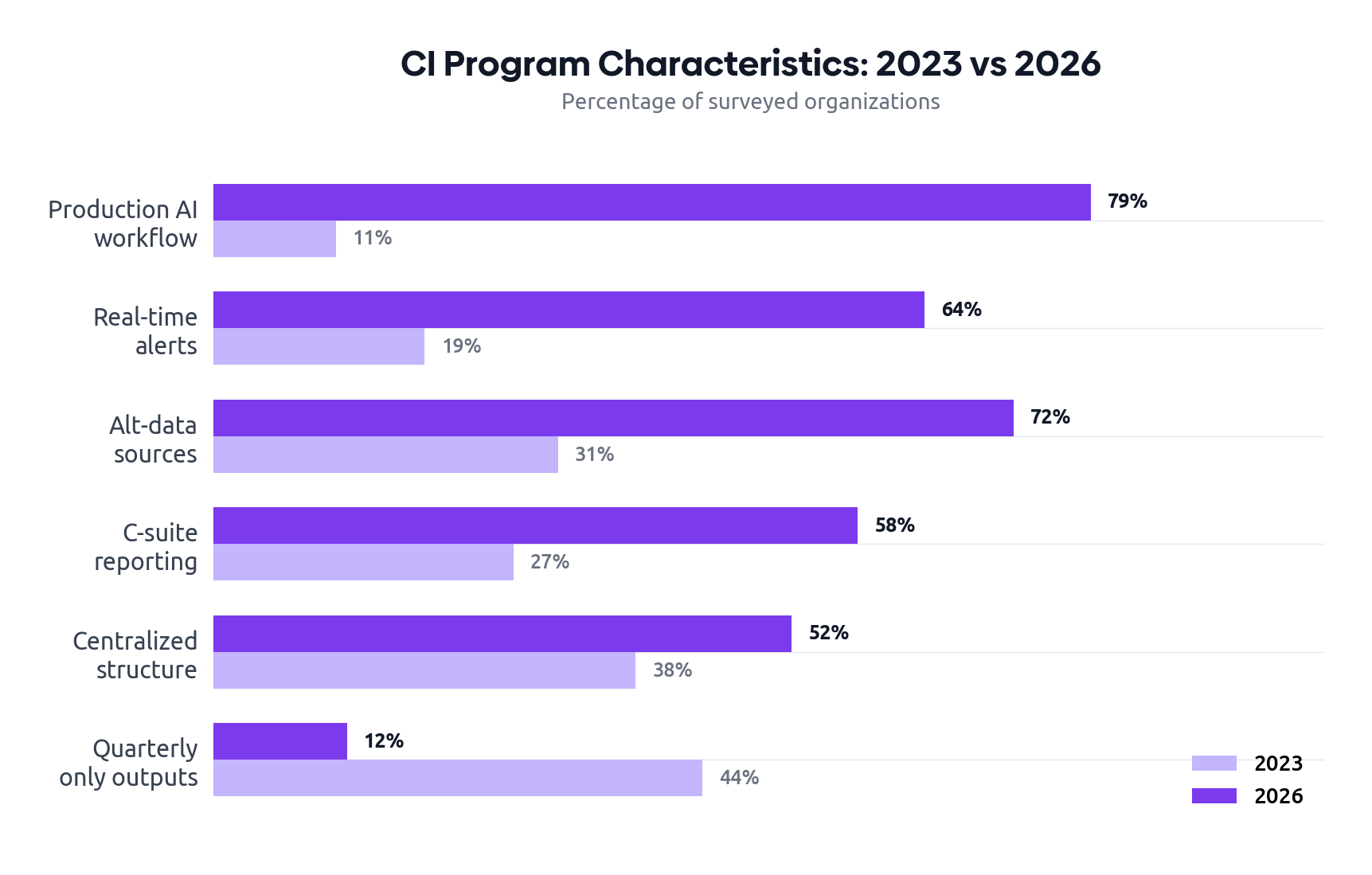

| Metric | 2023 | 2026 | Direction |
|---|---|---|---|
| Median CI team size (FTE) | 3.2 | 5.1 | ▲ up |
| Median annual CI budget (USD) | $510K | $890K | ▲ up |
| Programs reporting to C-suite | 27% | 58% | ▲ up |
| At least one production AI workflow | 11% | 79% | ▲ up |
| Producing real-time alerts | 19% | 64% | ▲ up |
| Producing only quarterly outputs | 44% | 12% | ▼ down |
| Use of external alt-data sources | 31% | 72% | ▲ up |
| Centralized reporting structure | 38% | 52% | ▲ up |

A maturity model for 2026

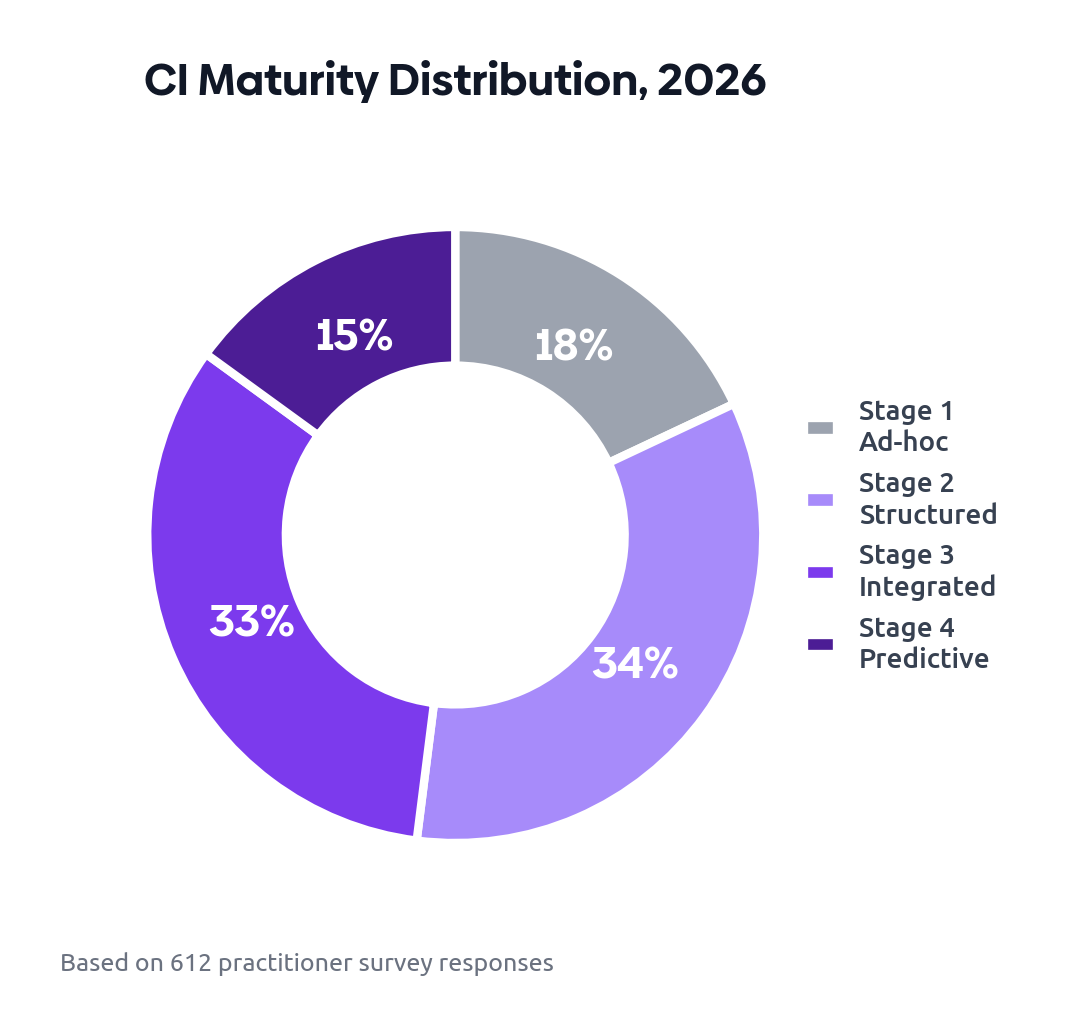

In our practitioner interviews, we found that organizations cluster into four recognizable stages of CI maturity. The boundaries are not crisp, and many programs straddle two adjacent stages, but the model is useful as a self-diagnostic. Crucially, maturity is not the same as size — we encountered 12-person CI teams operating at Stage 2, and four-person teams operating clearly at Stage 4.

- Stage 1 — Ad-hoc. No dedicated team. CI happens reactively when a deal is at risk or an executive asks a question. Outputs are typically a deck or a Slack message, with no archival discipline. Approximately 18% of surveyed organizations.

- Stage 2 — Structured. A small dedicated team produces recurring deliverables: monthly newsletters, quarterly landscape decks, battlecards. Workflows are largely manual. Approximately 34%.

- Stage 3 — Integrated. CI is embedded in product, sales, and strategy processes via systems of record. Real-time alerting is in place for tier-one competitors. Approximately 33%.

- Stage 4 — Predictive. The function moves beyond reporting to forecasting and scenario modeling. AI agents conduct continuous monitoring with human review. Outputs include probabilistic assessments and decision recommendations. Approximately 15%.

The most consequential gap is between Stages 2 and 3. It is the point at which CI either becomes a strategic capability or remains a research function. Crossing it requires investment in integration, governance, and — most often underestimated — analyst training.
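Because the model is meant as a self-diagnostic, it can be sketched as a simple gated checklist. The Python below is a minimal illustration, not the scoring method used in our survey: the `ProgramProfile` attributes are hypothetical yes/no questions paraphrased from the stage descriptions, and each stage requires every criterion of the stages below it.

```python
from dataclasses import dataclass

@dataclass
class ProgramProfile:
    # Hypothetical yes/no attributes paraphrasing the stage descriptions.
    # Team size is deliberately absent: maturity is not the same as size.
    dedicated_team: bool            # Stage 2: a dedicated team exists
    recurring_deliverables: bool    # Stage 2: newsletters, landscape decks, battlecards
    systems_of_record: bool         # Stage 3: CI embedded in product/sales/strategy systems
    realtime_tier_one_alerts: bool  # Stage 3: real-time alerting on tier-one competitors
    forecasting_outputs: bool       # Stage 4: probabilistic assessments, scenarios, recommendations

def maturity_stage(p: ProgramProfile) -> int:
    """Return the highest stage whose criteria, and all lower stages' criteria, are met."""
    if not (p.dedicated_team and p.recurring_deliverables):
        return 1  # Ad-hoc
    if not (p.systems_of_record and p.realtime_tier_one_alerts):
        return 2  # Structured
    if not p.forecasting_outputs:
        return 3  # Integrated
    return 4      # Predictive

# A team that produces forecasts but has not integrated with systems of record
# is scored at Stage 2: the Stage 2-to-3 gap has to be crossed first.
print(maturity_stage(ProgramProfile(True, True, False, True, True)))  # → 2
```

Strict gating is a deliberate simplification. Since many programs straddle two stages, a weighted score per stage may fit better in practice.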

The AI transformation

If a single force has reshaped competitive intelligence in the past three years, it is the operational maturity of large language models. The 2024 generation made it cheap to summarize. The 2025 generation made it cheap to reason. The 2026 generation has made it cheap to investigate.

From summarization to investigation

Three years ago, the most common AI workflow in CI was earnings-call summarization. It was useful but bounded: feed a transcript in, get bullet points out. Today, the median mature CI program runs investigations that once required an analyst-week of effort: “Find every product announcement from these 14 competitors in the last quarter, classify them by feature area, identify which ones address gaps in our roadmap, and draft a one-page brief for the product council.” That workflow now takes 20 minutes, not a week.

This expansion of scope has changed the work of the analyst. Routine collection has been automated; what remains — and what is valued — is judgment, framing, and the ability to challenge the output.

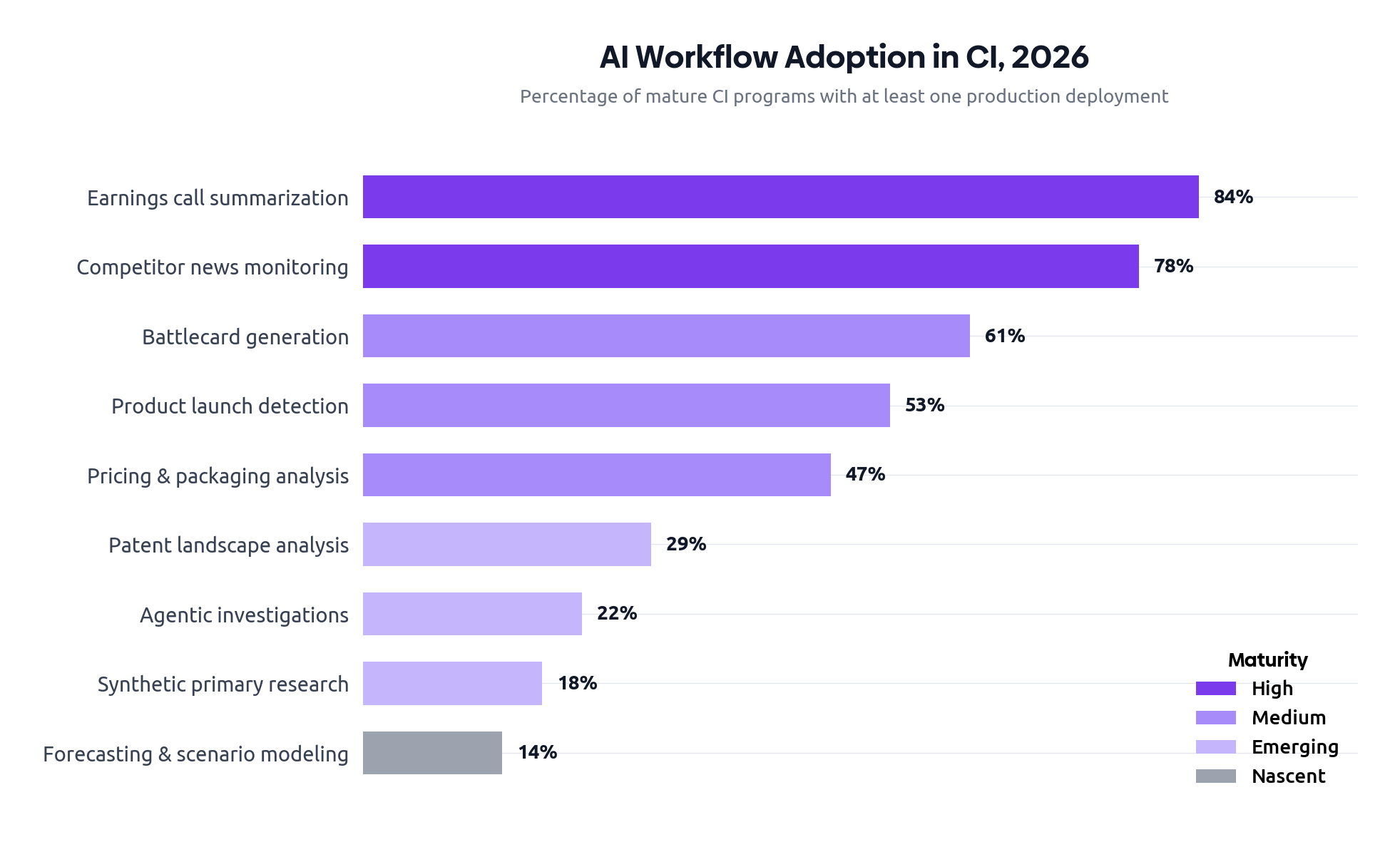

Where AI is actually being used

| Workflow | Adoption | Maturity | Risk profile |
|---|---|---|---|
| Earnings call & transcript summarization | 84% | High | Low |
| Competitor news monitoring & triage | 78% | High | Low |
| Battlecard generation & maintenance | 61% | Medium | Medium |
| Pricing & packaging analysis | 47% | Medium | Medium |
| Product launch detection & classification | 53% | Medium | Low |
| Patent landscape analysis | 29% | Emerging | Medium |
| Synthetic primary research (AI personas) | 18% | Emerging | High |
| Agentic deep-dive investigations | 22% | Emerging | High |
| Forecasting & scenario modeling | 14% | Nascent | High |

The governance gap

Adoption has outpaced governance. Only 41 percent of organizations with production AI in CI workflows have documented policies covering hallucination handling, citation standards, or human-in-the-loop checkpoints. Fewer than a quarter audit AI-generated outputs on any regular cadence. This is the most underappreciated risk in the discipline today.

The failure mode is rarely catastrophic; it is corrosive. An AI-generated competitor profile invents a leadership team. A pricing comparison hallucinates a tier that does not exist. A battlecard cites a discontinued product as current. Each error, taken alone, looks small. Cumulatively, they erode trust — and once sales reps stop trusting battlecards, the program is in serious trouble.

Leading programs treat AI outputs as draft, not final. They require source citations for every factual claim, enforce a human review gate before anything is published into a system of record, track an internal accuracy score over time, and publish a monthly “corrections register” alongside their outputs. None of this is glamorous; all of it is essential.
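The review-gate pattern described above can be sketched in a few data structures. This is a hypothetical Python illustration, not a description of any vendor's product: `Claim`, `Draft`, `publish`, and `record_audit` are invented names, and the placeholder URLs stand in for real citations. The gate blocks any draft with an uncited claim or without a named human approver, and audits feed both the accuracy score and the corrections register.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

@dataclass
class Claim:
    text: str
    sources: List[str]  # citations backing this factual claim

@dataclass
class Draft:
    title: str
    claims: List[Claim]
    approved_by: Optional[str] = None  # set only by a human reviewer

@dataclass
class ReviewLog:
    published: List[str] = field(default_factory=list)
    corrections: List[Tuple[str, str]] = field(default_factory=list)  # corrections register
    claims_checked: int = 0
    claims_correct: int = 0

    def accuracy(self) -> float:
        """Internal accuracy score: share of audited claims found correct."""
        return self.claims_correct / self.claims_checked if self.claims_checked else 1.0

def publish(draft: Draft, log: ReviewLog) -> bool:
    """Review gate: block drafts with any uncited claim or without human approval."""
    if any(not c.sources for c in draft.claims) or draft.approved_by is None:
        return False
    log.published.append(draft.title)
    return True

def record_audit(log: ReviewLog, title: str, checked: int, correct: int, note: str = "") -> None:
    """Log a periodic audit of a published output; errors go to the corrections register."""
    log.claims_checked += checked
    log.claims_correct += correct
    if correct < checked:
        log.corrections.append((title, note))

log = ReviewLog()
brief = Draft("Acme pricing update",
              [Claim("Acme added an Enterprise tier", ["https://example.com/acme/pricing"])])
print(publish(brief, log))  # → False: cited, but no human approval yet
brief.approved_by = "reviewer@example.com"
print(publish(brief, log))  # → True
record_audit(log, brief.title, checked=10, correct=9, note="Tier name misquoted")
print(log.accuracy(), log.corrections)  # → 0.9 [('Acme pricing update', 'Tier name misquoted')]
```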

Disclosure: Segment8's platform enforces source-linked AI drafts with human approval gates as a core workflow — a design choice informed by the same governance patterns described here.

Agents: the 2026 story

The most discussed and least understood development of the past year has been the emergence of CI-specific agentic workflows: orchestrated chains of LLM calls, tool invocations, and retrieval steps that can perform multi-step research with minimal human intervention. In practice, today’s agents are best suited to bounded investigations — “build a profile of this newly funded competitor” — rather than open-ended research. They are not yet ready to replace senior analysts, but they have meaningfully changed what a single analyst can deliver in a day.

Our survey suggests that approximately 22 percent of programs have deployed at least one production agent, with another 35 percent piloting. Concerns center on cost (agents consume materially more inference than chatbot-style workflows), reproducibility (the same query can return different answers on different runs), and the difficulty of evaluating agent quality without robust ground-truth benchmarks.
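One way to make the reproducibility concern measurable is to run the same bounded query several times and check how often the answers agree. The sketch below assumes a hypothetical `run_agent` callable that takes a query string and returns a short answer; the stand-in agent exists only to make the example runnable.

```python
import itertools
from collections import Counter
from typing import Callable

def agreement_rate(run_agent: Callable[[str], str], query: str, n_runs: int = 5) -> float:
    """Run one query n_runs times; return the share of runs matching the most common answer.

    1.0 means every run agreed. Exact string matching after light normalization is crude:
    free-text answers usually need semantic comparison or extraction of a structured field.
    """
    answers = [run_agent(query).strip().lower() for _ in range(n_runs)]
    _, modal_count = Counter(answers).most_common(1)[0]
    return modal_count / n_runs

# Hypothetical stand-in for a real agent whose answers drift between runs.
fake_answers = itertools.cycle(["Series B", "Series B", "Series C"])

def flaky_agent(query: str) -> str:
    return next(fake_answers)

print(round(agreement_rate(flaky_agent, "What is Acme's latest funding round?", n_runs=6), 2))  # → 0.67
```

Agreement measures consistency, not correctness: an agent that returns the same wrong answer every time scores 1.0, which is why ground-truth benchmarks are still needed.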

Data sources and collection methods

Competitive intelligence has always been a data-pipeline problem dressed up as an insights discipline. In 2026, that pipeline is broader, faster, and more legally complex than ever.

The expanded source palette

Public web content remains the foundation, but its relative share of the input mix has been shrinking for three consecutive years. Replacing it are categories that did not exist as standard CI inputs five years ago: app-store telemetry providers, hiring-signal vendors, web-traffic estimators built on clickstream panels, patent and trademark databases with semantic search layers, shipping and trade-flow data, and a growing market for ethically sourced “alternative” datasets ranging from satellite imagery to corporate-card transaction panels.

The structural shift is that competitor behavior is now visible through dozens of refracted lenses, and triangulating across them supports conclusions that no single source can. A 30 percent jump in a competitor’s engineering job postings, a sudden uptick in trademark filings in a new category, and executive movements out of an adjacent firm are each ambiguous on their own but, read together, tell a coherent story. The discipline of CI is increasingly the discipline of fusing such signals.
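A common way to formalize this kind of triangulation is Bayes' rule in log-odds form, where each signal shifts the odds of a hypothesis by its likelihood ratio. The Python sketch below is illustrative only: the prior and the likelihood ratios are invented numbers, and adding log ratios assumes the signals are conditionally independent, which real competitor signals often are not.

```python
import math
from typing import Dict

def fuse_signals(prior: float, likelihood_ratios: Dict[str, float]) -> float:
    """Combine signals with Bayes' rule in log-odds form; return the posterior probability.

    Each ratio is P(signal | hypothesis) / P(signal | not hypothesis). Summing log ratios
    assumes conditional independence; correlated signals will overstate confidence.
    """
    log_odds = math.log(prior / (1 - prior))
    for ratio in likelihood_ratios.values():
        log_odds += math.log(ratio)
    return 1 / (1 + math.exp(-log_odds))

# Hypothesis: "the competitor is preparing to enter a new category". All numbers are illustrative.
signals = {
    "engineering_postings_up_30pct": 2.0,
    "trademark_filings_in_new_category": 3.0,
    "executives_hired_from_adjacent_firm": 2.0,
}
strongest_alone = fuse_signals(0.10, {"trademark_filings_in_new_category": 3.0})
combined = fuse_signals(0.10, signals)
print(round(strongest_alone, 2), round(combined, 2))  # → 0.25 0.57
```

Here the strongest signal alone moves a 10 percent prior to 25 percent, while the three together reach about 57 percent. If the signals are correlated, treating them as independent will overstate the combined confidence.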

| Source category | Adoption | Perceived value |
|---|---|---|
| Public news & press releases | 98% | Medium |
| Competitor websites & documentation | 96% | High |
| Earnings calls & filings (10-K, 10-Q) | 91% | High |
| Job postings & hiring signals | 74% | High |
| Patent & trademark filings | 62% | Medium |
| Social listening (LinkedIn, X, Reddit) | 71% | Medium |
| App-store telemetry & rankings | 47% | Medium |
| Web traffic estimators | 59% | Low–Medium |
| Customer/win-loss interviews | 68% | Very High |
| Shipping, trade & supply chain data | 21% | High |
| Conference talks, podcasts, webinars | 53% | Medium |
| AI-assisted synthesis of the above | 67% | High |

The collapse of “manual scraping”

Five years ago, much of competitive intelligence was assembled by analysts manually visiting competitor sites, screenshotting pricing pages, and pasting findings into spreadsheets. That practice has not disappeared, but it has been substantially displaced by purpose-built monitoring tools that watch hundreds of pages continuously and surface diffs. Legal uncertainty around scraping has accelerated the move toward licensed feeds and vendor-mediated access.
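At their core, the diff-surfacing monitors described above compare each new capture of a page with the previous one. The Python sketch below shows that loop under stated assumptions: page text is assumed to arrive from a licensed feed or a permitted fetch (fetching and scheduling are omitted), snapshots live in an in-memory dict, and the URL is a placeholder.

```python
import difflib
import hashlib
from typing import Dict, List

def fingerprint(page_text: str) -> str:
    """Hash of the page text with whitespace collapsed, so pure reflows are not flagged."""
    normalized = " ".join(page_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def detect_change(url: str, new_text: str, snapshots: Dict[str, str]) -> List[str]:
    """Compare a fresh capture with the stored snapshot; return unified-diff lines, or [] if unchanged."""
    old_text = snapshots.get(url)
    snapshots[url] = new_text  # keep the latest capture as the next baseline
    if old_text is None or fingerprint(old_text) == fingerprint(new_text):
        return []  # first capture, or no meaningful change
    return list(difflib.unified_diff(
        old_text.splitlines(), new_text.splitlines(),
        fromfile="previous", tofile="current", lineterm=""))

snapshots: Dict[str, str] = {}
url = "https://example.com/pricing"  # placeholder URL
detect_change(url, "Pro: $49/user/month\nTeam: $99/user/month", snapshots)
diff = detect_change(url, "Pro: $59/user/month\nTeam: $99/user/month", snapshots)
print("\n".join(diff))  # a unified diff showing the Pro price change
```

Hashing normalized text first keeps whitespace-only reflows out of the alert stream; production tools also have to filter rotating banners, timestamps, and other boilerplate, which this sketch does not attempt.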

This shift has consequences. On the positive side, analysts spend less time on rote collection and more on synthesis. On the negative side, organizations have become dependent on vendor coverage decisions: if your monitoring vendor does not track a particular competitor’s developer documentation site, you may not know it has changed. Sophisticated programs maintain a “source map” that documents exactly which signals are covered by which tool, and where the gaps are.
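A source map can be as simple as a table of competitor, signal, and covering tool. The Python below is a hypothetical sketch; the competitor names, signal names, and tool names are placeholders. It lists uncovered pairs, including signals that were never mapped at all, and counts how much coverage each tool carries as a rough measure of vendor dependency.

```python
from typing import Dict, List, Optional, Tuple

SourceMap = Dict[str, Dict[str, Optional[str]]]

# Hypothetical source map: competitor -> signal -> tool that covers it (None = known gap).
SOURCE_MAP: SourceMap = {
    "Acme":   {"pricing_page": "monitor_tool_a", "developer_docs": None, "job_postings": "hiring_vendor_b"},
    "Globex": {"pricing_page": "monitor_tool_a", "developer_docs": "monitor_tool_a", "job_postings": None},
}

# Signals the program has decided every tracked competitor needs.
REQUIRED_SIGNALS = ["pricing_page", "developer_docs", "job_postings", "patent_filings"]

def coverage_gaps(source_map: SourceMap, required: List[str]) -> List[Tuple[str, str]]:
    """Return (competitor, signal) pairs with no covering tool, including signals never mapped."""
    return [
        (competitor, signal)
        for competitor, coverage in source_map.items()
        for signal in required
        if coverage.get(signal) is None
    ]

def tools_in_use(source_map: SourceMap) -> Dict[str, int]:
    """Count how many (competitor, signal) pairs each tool covers: a rough dependency measure."""
    counts: Dict[str, int] = {}
    for coverage in source_map.values():
        for tool in coverage.values():
            if tool is not None:
                counts[tool] = counts.get(tool, 0) + 1
    return counts

for competitor, signal in coverage_gaps(SOURCE_MAP, REQUIRED_SIGNALS):
    print(f"GAP: {competitor} / {signal}")
print(tools_in_use(SOURCE_MAP))  # → {'monitor_tool_a': 3, 'hiring_vendor_b': 1}
```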

Primary research is making a comeback

One of the more surprising findings of our research is the resurgence of investment in primary qualitative research: structured interviews with customers, prospects who chose a competitor, former employees of competitors (within ethical and legal bounds), and channel partners. After a decade in which programs leaned ever more heavily on digital signals, leading CI teams are reinvesting in the human conversation. The reason is straightforward: as AI commoditizes the synthesis of public information, the strategic edge increasingly lies in the proprietary information no one else has.

If three of your competitors could plausibly produce the same intelligence brief about a fourth competitor using only public sources, the brief is not actually intelligence — it is desk research. Strategic CI begins where public information ends.

Tools and technology landscape

The CI software market has consolidated and expanded simultaneously. Consolidated, in that several mid-market platforms have been acquired by enterprise strategy or sales-intelligence vendors. Expanded, in that the AI wave has created room for a new cohort of AI-native entrants that did not exist three years ago.

How buyers describe their stack

Among respondents at Stage 3 and Stage 4 maturity, the median CI program now uses between four and seven distinct tools — substantially more than the two or three typical in 2022. The growth is not random: programs are layering specialized tools (e.g., a dedicated patent intelligence platform) on top of a general monitoring and synthesis spine. The result is a coherent stack rather than a sprawl, but the integration burden is real and growing.

| Layer | Function | Buyer maturity |
|---|---|---|
| Monitoring & ingestion | Continuous tracking of web, news, filings, social | Universal |
| Synthesis & analysis (AI-native) | LLM-powered summarization, classification, briefing | Rapid adoption |
| Alternative data marketplaces | Job postings, traffic, app data, financial estimates | Maturing |
| Battlecard & enablement platforms | Sales-facing competitor content management | Stable |
| Specialized verticals (patent, regulatory) | Domain-specific intelligence | Sector-dependent |
| Win-loss & primary research tools | Structured customer/prospect interviews | Growing |
| Knowledge graph / system of record | Persistent competitor entity model | Emerging |
| Workflow & distribution | Slack, Teams, CRM integration | Universal |

The build-versus-buy question, revisited

For most of the discipline’s history, the buy decision was easy: dedicated CI platforms offered capabilities that no internal team could match, and the cost of building was prohibitive. The arrival of cheap, capable LLM APIs has complicated this calculus. We now meet sophisticated CI teams who have built large portions of their stack — particularly the synthesis and briefing layers — internally, often with two to four engineers embedded in or adjacent to the function.

Our view is that build-versus-buy is the wrong framing in 2026. The right framing is layer-by-layer. Monitoring and ingestion are still firmly buy: the infrastructure to maintain reliable coverage of thousands of sources is non-trivial and rarely strategic. Synthesis and analysis are increasingly buy-plus-build: vendors provide the base, but high-performing programs build customizations on top. Knowledge graphs and systems of record are emerging as the layer where building offers real differentiation, because the data model encodes the program’s own theory of the market.

Integration is the underrated battleground

Across our interviews, the most common source of friction was not the capability of any individual tool, but the lack of integration between them. Competitor entities are defined differently in each system. A new product launch detected by the monitoring tool does not propagate to the battlecard platform without human intervention. The win-loss platform’s findings live in their own silo. Resolving these issues is mostly unglamorous data engineering — but it is where the next wave of program-level productivity gains will come from.

Programs that overinvest in synthesis tools without first investing in clean ingestion and entity resolution end up with elegant briefs built on inconsistent foundations. The most common pattern we observed in struggling programs: beautiful battlecards that contradict each other across deals because the underlying competitor records are not unified.
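Entity resolution is the usual remedy: every tool's competitor names are mapped to one canonical ID before anything downstream consumes them. The Python below is a minimal sketch under assumptions: the `ALIASES` table and the suffix list are hypothetical, matching is exact after normalization, and unknown names return `None` so a human can decide rather than the system guessing.

```python
import re
import unicodedata
from typing import Dict, Optional

# Hypothetical alias table maintained by the CI team: normalized name -> canonical competitor ID.
ALIASES: Dict[str, str] = {
    "acme": "acme",
    "acme analytics": "acme",  # e.g. an acquired product brand rolled up to its parent
    "globex": "globex",
}

LEGAL_SUFFIXES = re.compile(r"\b(inc|corp|corporation|ltd|llc|gmbh|plc)\b")

def normalize(name: str) -> str:
    """Lowercase and strip accents, punctuation, and legal suffixes: 'ACME, Inc.' -> 'acme'."""
    text = unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode("ascii")
    text = re.sub(r"[^\w\s]", " ", text.lower())
    text = LEGAL_SUFFIXES.sub(" ", text)
    return " ".join(text.split())

def resolve(name: str) -> Optional[str]:
    """Map a raw name from any tool to one canonical ID; None means 'route to a human'."""
    return ALIASES.get(normalize(name))

records = {"monitoring": "ACME, Inc.", "battlecards": "Acme Corporation", "win_loss": "Acme Analytics"}
print({tool: resolve(raw) for tool, raw in records.items()})
# → {'monitoring': 'acme', 'battlecards': 'acme', 'win_loss': 'acme'}
print(resolve("Initech LLC"))  # → None (unknown entity, needs review)
```

Fuzzy matching (for example, `difflib.get_close_matches` from the standard library) can propose candidate aliases, but in this design a person confirms them before they enter the table, since a wrong merge corrupts every downstream battlecard.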

Organizational models and reporting lines

Where CI sits in the organization, and to whom it reports, has more bearing on its impact than almost any other structural variable. The wrong reporting line can render an otherwise sophisticated program invisible to the decisions it should be informing.

The pendulum has swung back toward centralization

Through the mid-2010s and early 2020s, the dominant trend was to embed CI analysts directly into product and sales organizations. The logic was sound — analysts closer to the work would produce more relevant intelligence — but in practice, the distributed model often resulted in fragmented coverage, duplicated effort, and inconsistent analytic standards. Embedded analysts were also disproportionately likely to drift toward enablement and sales support, leaving longer-term strategic questions unanswered.

In 2026, 52 percent of programs in our survey are centralized, up from 38 percent three years ago. Programs reporting to strategy or finance functions are roughly twice as likely to be invited into M&A and capital allocation discussions as those reporting into marketing or sales.

| Reporting line | Share of programs | Influence (1–5) |
|---|---|---|
| Chief Strategy Officer | 28% | 4.2 |
| CMO / Marketing | 21% | 3.4 |
| CFO / Finance | 14% | 4.0 |
| CEO (direct) | 11% | 4.5 |
| Chief Product Officer | 10% | 3.6 |
| Sales / Revenue | 9% | 3.1 |
| Other (incl. corp dev) | 7% | 3.8 |

The hub-and-spoke model

The most successful programs in our survey are not purely centralized or purely embedded; they are hub-and-spoke. A small central team owns the platform, governance, methodology, and the senior-most synthesis work. Embedded analysts or analyst-light roles sit within product lines, key sales segments, and corporate development. The hub sets standards; the spokes apply them in context. Critically, the embedded analysts have a dotted-line reporting relationship to the central team for professional development and quality review.

This model is not free. It requires more headcount than pure centralization, and it requires a center of gravity strong enough to prevent the spokes from going native. But the programs we interviewed with this structure consistently reported higher executive engagement, better coverage of long-tail competitors, and greater retention of analyst talent.

Team composition

The median CI team in 2026 has 5.1 full-time equivalents, but the variance is large: a fifth of programs operate with two or fewer FTEs, while another fifth have more than 12. Among teams of five or more, we see consistent role specialization: a lead/director, two to three senior analysts (often with industry specialization), an operations or research-engineering role focused on tooling and data plumbing, and one or more junior analysts. Fewer than half of teams have a dedicated AI/ML role today, but that share is growing faster than that of any other role.

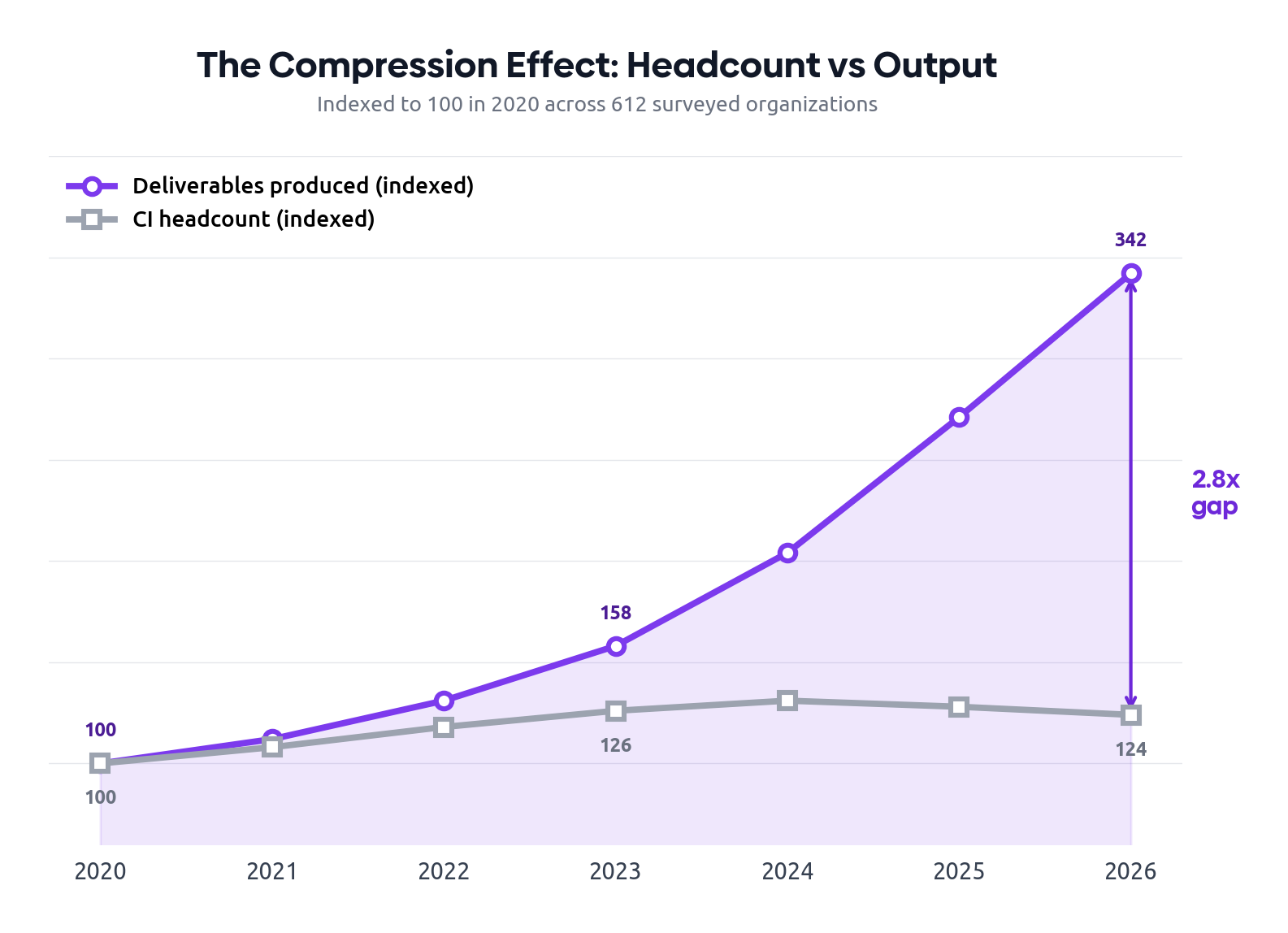

The compression effect: doing more with fewer people

The defining structural story of 2024–2026 is not growth — it is compression. CI headcount grew steadily from 2020 through 2023, peaking at an indexed value of roughly 131 (against a 2020 baseline of 100). Then it plateaued. In 2025 it contracted slightly, and in 2026 it contracted again. The median CI team is now 3 percent smaller than its 2024 peak.

Output, meanwhile, has exploded. The number of deliverables (battlecards, alerts, briefs, competitive assessments) produced by the average CI team is now 3.4 times its 2020 level. Almost all of that acceleration came after 2023, coinciding with the operational deployment of LLM-powered workflows. The result is a 2.8× gap between headcount and output, and it is widening every quarter.

This compression is not evenly distributed. Stage 3 and Stage 4 programs — those with mature AI workflows — have absorbed headcount reductions while increasing output. Stage 1 and 2 programs, lacking the tooling to substitute, have simply shrunk. The gap between well-tooled and under-tooled programs is now as much an organizational equity issue as a technology one.

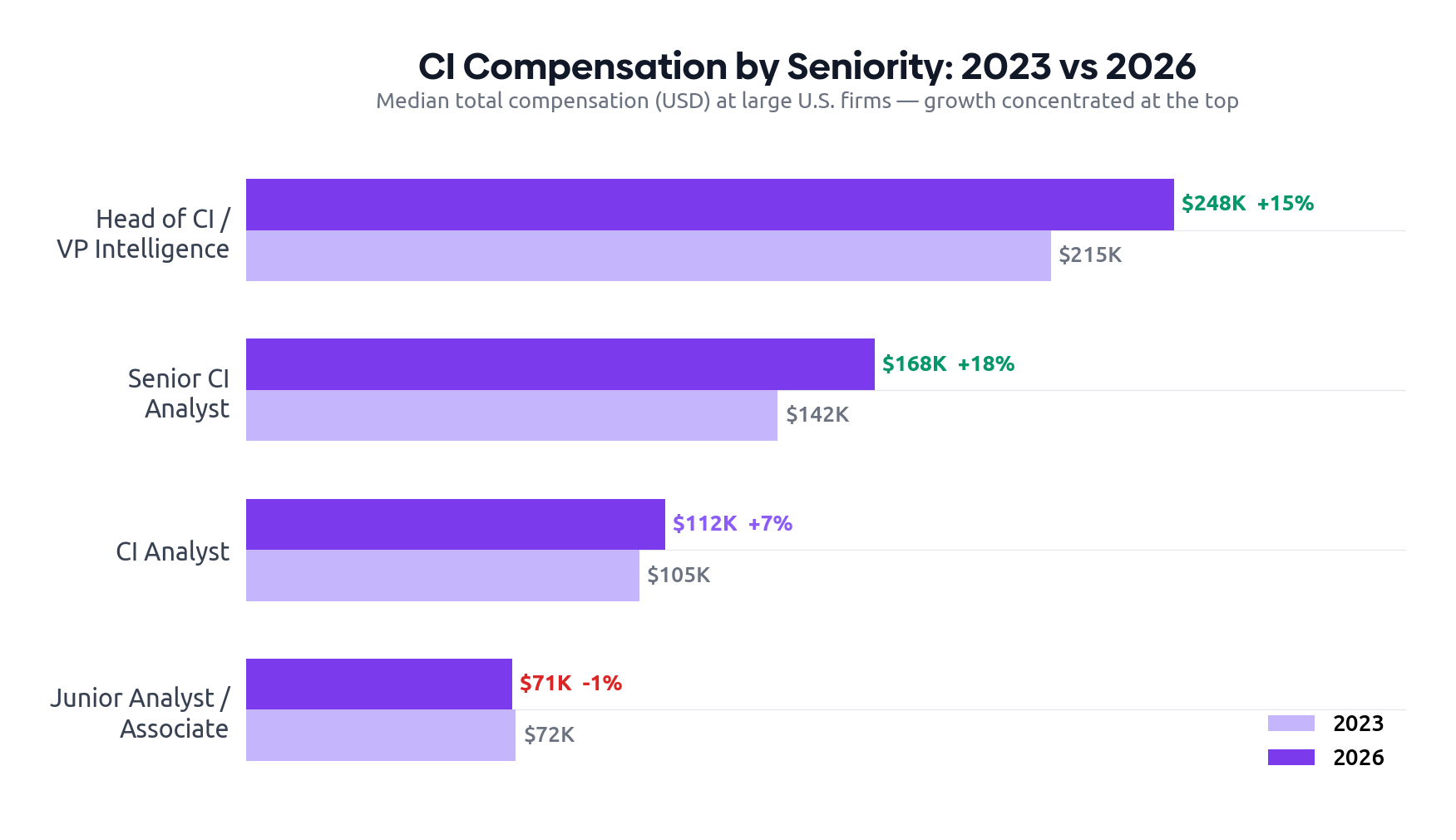

Salary trends: growth at the top, stagnation at the bottom

Compensation data tells the compression story from a different angle. Senior roles — Head of CI, VP of Intelligence — have seen robust salary growth of 15–18 percent over three years, driven by demand for leaders who can orchestrate AI-augmented teams and present to executive stakeholders. Senior analysts, particularly those with AI workflow skills, have seen comparable growth.

Junior and associate roles, by contrast, have stagnated. Median compensation for entry-level CI positions has been flat to slightly negative in real terms since 2023. The explanation is straightforward: the tasks that junior analysts historically performed — source monitoring, alert triage, first-pass summarization — are precisely the tasks most successfully automated. Organizations are hiring fewer juniors and paying them no more than before.

| Role | 2023 | 2026 | Change |
|---|---|---|---|
| Head of CI / VP Intelligence | $215K | $248K | ▲ +15% |
| Senior CI Analyst | $142K | $168K | ▲ +18% |
| CI Analyst | $105K | $112K | ▲ +7% |
| Junior Analyst / Associate | $72K | $71K | ▼ −1% |

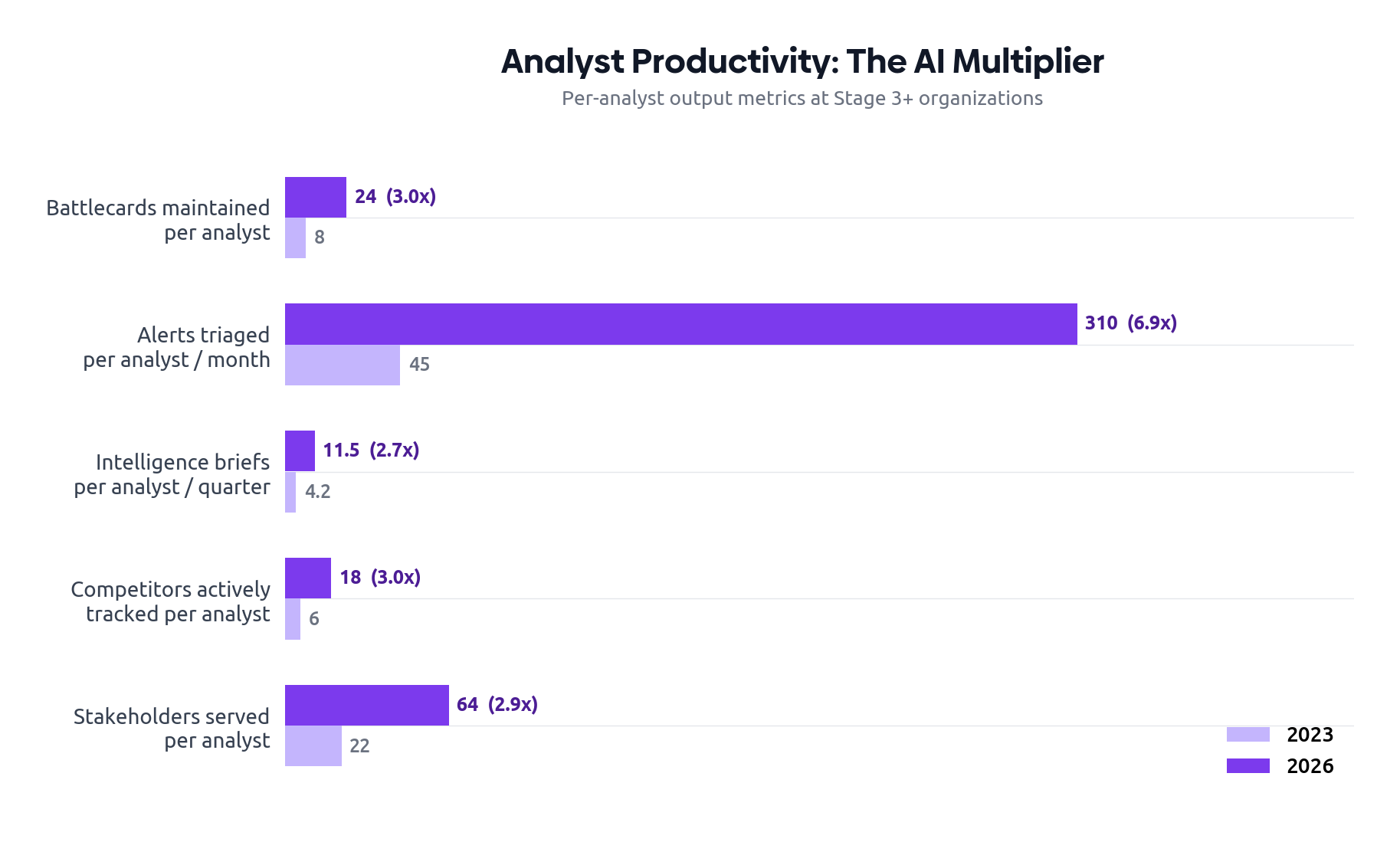

The productivity multiplier

The most striking measure of compression is per-analyst productivity. At Stage 3+ organizations with mature AI workflows, a single analyst now maintains three times as many battlecards, triages nearly seven times as many alerts, and serves three times as many internal stakeholders as their 2023 counterpart. The gains are largest in high-volume, structured tasks — precisely the workflows where AI assistance is most mature.

These gains come with an uncomfortable implication. If one analyst can do what three did before, the business case for growing the team is harder to make — even as the scope of competitive intelligence expands. Leaders we interviewed described this as the "productivity trap": the better your team performs per-head, the harder it is to justify additional headcount, even when strategic coverage gaps remain.

Teams that have compressed without investing in resilience — cross-training, documented workflows, manual fallback procedures — are one AI outage or one senior departure away from a coverage crisis. The most sophisticated programs treat compression as an opportunity to reallocate, not eliminate. They have reinvested freed capacity into primary research, scenario modeling, and executive engagement — the high-judgment work that AI cannot yet perform. The least sophisticated programs have simply pocketed the headcount savings. We expect the consequences to become visible within 12–18 months.

Platforms like Segment8 are purpose-built for this compressed reality — AI agents handle signal triage and battlecard maintenance, freeing analysts to focus on the strategic work that justifies their seat at the table.

Industry-specific trends

The general patterns described in this report apply across sectors, but the specifics — what gets monitored, how intelligence is consumed, and what counts as a strategic question — vary significantly. Below we summarize what is distinctive in five industries that together account for roughly 70 percent of dedicated CI spend.

Technology and SaaS

Technology firms remain the most mature CI buyers and the most demanding. Product launch cadence is fast, pricing changes are frequent, and the competitive set is unstable: new entrants emerge weekly, and incumbents can be disrupted by a single funding event. The 2026 SaaS CI program is heavily oriented toward real-time monitoring, with strong integrations into product management and sales enablement. AI adoption is highest in this sector — 91 percent of tech CI programs are in production with at least one AI workflow, versus 79 percent overall.

The most acute pain point in tech CI is the proliferation of competitors that are too small to track individually but too numerous to ignore. Leading programs respond with tiered coverage models: tier-one competitors get deep, continuous treatment; tier-two competitors get automated monitoring with quarterly review; the long tail is tracked by AI agents and surfaced only on signal.
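The tiered model amounts to a small policy table plus an assignment rule. In the Python sketch below, the three policies restate the tiers above; the assignment rule, its inputs (`head_to_head_share`, `deals_lost_last_year`), and its thresholds are hypothetical and would need calibrating to each program's own deal data.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CoveragePolicy:
    tier: str
    monitoring: str
    human_review: str

# The three tiers described above.
POLICIES = {
    1: CoveragePolicy("tier one", "deep, continuous", "ongoing"),
    2: CoveragePolicy("tier two", "automated monitoring", "quarterly"),
    3: CoveragePolicy("long tail", "AI agents", "only when a signal fires"),
}

def assign_tier(head_to_head_share: float, deals_lost_last_year: int) -> int:
    """Hypothetical rule: the more often we meet or lose to a competitor, the deeper the coverage.

    head_to_head_share: fraction of our competitive deals in which this competitor appeared.
    Thresholds are illustrative, not survey-derived.
    """
    if head_to_head_share >= 0.20 or deals_lost_last_year >= 10:
        return 1
    if head_to_head_share >= 0.05 or deals_lost_last_year >= 2:
        return 2
    return 3

for name, share, lost in [("Acme", 0.35, 14), ("Globex", 0.08, 1), ("NewCo", 0.01, 0)]:
    policy = POLICIES[assign_tier(share, lost)]
    print(f"{name}: {policy.tier}, {policy.monitoring}, review {policy.human_review}")
```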

Pharmaceuticals and life sciences

CI in pharma operates on a fundamentally different clock. Drug development timelines, clinical trial readouts, regulatory filings, and patent expirations dominate the analytic agenda, and the relevant time horizons are measured in years rather than weeks. The discipline is deeply intertwined with regulatory affairs and is among the most mature consumers of specialized intelligence platforms — clinical trial trackers, patent analytics, and conference abstract monitoring services.

The most distinctive 2026 trend in pharma CI is the integration of AI-assisted scientific literature review into competitive workflows. What used to require a medical writer’s week now takes hours, freeing analysts to focus on strategic implication.

Financial services

Financial services CI is bifurcated. Retail-facing institutions (banks, insurers, wealth platforms) operate competitive intelligence functions that increasingly resemble those of consumer-tech companies — heavy in pricing intelligence, app feature comparison, and digital customer experience benchmarking. Capital markets and institutional businesses, in contrast, run intelligence functions oriented around regulatory developments, client wallet share, and senior banker movements.

Across both, the discipline has been reshaped by supervisory scrutiny of AI. Many institutions now subject AI-assisted competitor analysis to additional review under their model risk management frameworks. This has not slowed adoption, but it has substantially changed how CI teams document their work.

Consumer goods and retail

CPG and retail CI revolves around assortment, pricing, promotional cadence, and shelf or digital placement. The data ecosystem here is unusually rich: third-party scanner data, retail audit panels, price-comparison services, and e-commerce monitoring tools provide deep visibility. The 2026 frontier in this sector is the integration of social and creator signals into product strategy: which TikTok trends are driving competitor SKU launches, which influencer cohorts are shifting allegiance, which review patterns indicate a competitor’s product is in quality trouble.

Industrial and manufacturing

Industrial CI lags the sectors above in tooling maturity but is catching up rapidly. The discipline is shaped by long sales cycles, technical specifications, and complex channel structures. Patent analysis, trade flow data, and engineering job posting signals are particularly valuable. Many industrial programs are still oriented toward annual or semi-annual deliverables, which represents both a limitation and an opportunity: the gap between leaders and laggards within this sector is the largest of any we studied.

In every industry, the programs reporting the highest executive engagement share a single trait: they have made themselves indispensable to one specific recurring decision — quarterly pricing reviews, the product council, the M&A pipeline, or the annual operating plan. Generalist programs without a flagship decision to support consistently struggle to justify their budgets.

Ethical and legal considerations

Competitive intelligence has always operated in a zone where what is technically possible, what is legally permissible, and what is ethically appropriate are three different things. The expansion of the data ecosystem and the rise of AI have widened that zone, and not in ways that simplify it.

The four-quadrant test

In our interviews, we found that mature programs apply something like a four-quadrant test to any new collection method or data source:

- Is it lawful in the jurisdictions where we operate?

- Does it comply with the terms of service of the platforms involved?

- Would we be comfortable if our methods were described in detail to our own customers?

- Would we be comfortable if a competitor used the same methods against us?

A practice that fails any one of these tests should generally be avoided. This is not legalistic over-caution. We documented multiple recent cases where CI programs, having operated within the letter of the law, faced reputational consequences because their methods looked predatory in hindsight. The clearest example: programs that used AI-generated synthetic personas to extract information from competitors’ sales teams. Legal in most jurisdictions, but a public relations disaster when the practice came to light.

Privacy and data protection

Privacy regimes have continued to tighten globally. GDPR in Europe, the patchwork of U.S. state laws, India’s DPDP regime, Brazil’s LGPD, and a growing list of Asian and Middle Eastern frameworks have collectively raised the bar on what CI programs can do with personal data. Programs that scrape public profile data, infer organizational structures from public posts, or maintain dossiers on individual competitor employees should expect increasing scrutiny.

The pragmatic response we see among leading programs is to keep personal information at the periphery of the intelligence model, not at its center. Tracking that a competitor has hired a senior engineer with a particular specialization is generally fine. Building a detailed psychological profile of that engineer’s likely strategic priorities is not.

Geopolitical complications

Cross-border competitive intelligence has become significantly more sensitive. Programs that operate in, or research firms based in, the U.S., China, Russia, or sanctioned jurisdictions face overlapping export-control, sanctions, and national-security frameworks that did not meaningfully apply to CI work a decade ago. Several of our interviewees described cases in which legal counsel re-examined standard competitive research practices and curtailed them because of potential national-security implications.

Skills, talent, and the changing analyst profile

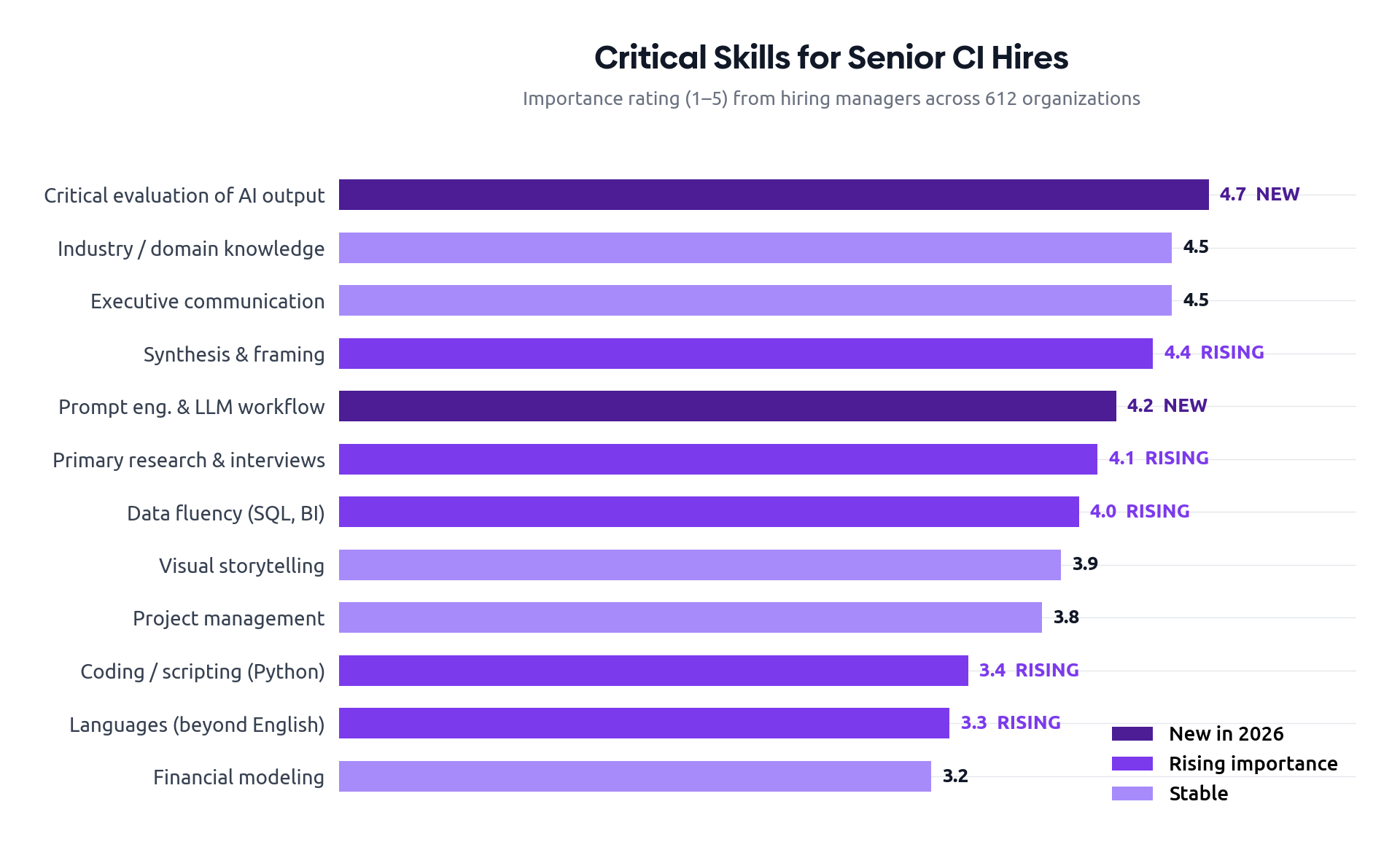

The composition of CI talent is shifting faster than any other dimension of the discipline. The senior analyst of 2020 was a researcher, communicator, and synthesizer. The senior analyst of 2026 is all of those things — plus a workflow designer, a prompt engineer, and a critic of AI outputs.

Which skills now command a premium

Our survey asked hiring managers to rate the relative importance of 18 skills when hiring CI analysts at the senior level. The results show a clear reordering. Traditional skills (industry knowledge, executive communication, primary research) remain near the top but have been joined by a cluster of newer competencies that did not appear in surveys before 2023.

| Skill | Importance (1–5) | Change vs. 2023 |
|---|---|---|
| Critical evaluation of AI-generated output | 4.7 | ▲ new |
| Industry / domain knowledge | 4.5 | — |
| Executive communication | 4.5 | — |
| Synthesis & framing | 4.4 | ▲ |
| Prompt engineering & LLM workflow design | 4.2 | ▲ new |
| Primary research & interviewing | 4.1 | ▲ |
| Data fluency (SQL, BI tools) | 4.0 | ▲ |
| Visual storytelling | 3.9 | — |
| Project management | 3.8 | — |
| Coding / scripting (Python) | 3.4 | ▲ |
| Languages (beyond English) | 3.3 | ▲ |
| Financial modeling | 3.2 | — |

The hiring funnel is changing

Three years ago, a typical CI hire came from one of three backgrounds: management consulting, equity research, or product marketing. Today, that pool has expanded to include investigative journalists, data scientists, and — increasingly — practitioners with mixed backgrounds combining industry expertise with applied AI experience. Roughly a fifth of senior CI hires in our 2026 cohort came from outside the traditional pipelines, up from 6 percent in 2022.

Compensation has tracked these shifts. Median total compensation for a senior CI analyst at a large U.S. firm has risen 28 percent over three years, with the strongest growth at the intersection of CI and applied AI. Many of the CI leaders we interviewed reported that retention has become harder, particularly for analysts who have built strong AI workflow skills and are now in demand outside the function.

Training and certification

Formal certifications remain a minor factor in hiring, but the picture is changing. Internal upskilling programs, particularly in AI workflow design and ethical CI practice, are the fastest-growing category of CI training spend. Several large organizations have launched in-house CI academies with curricula that look more like a hybrid of journalism school and data science bootcamp than the traditional research-methods training of a decade ago.

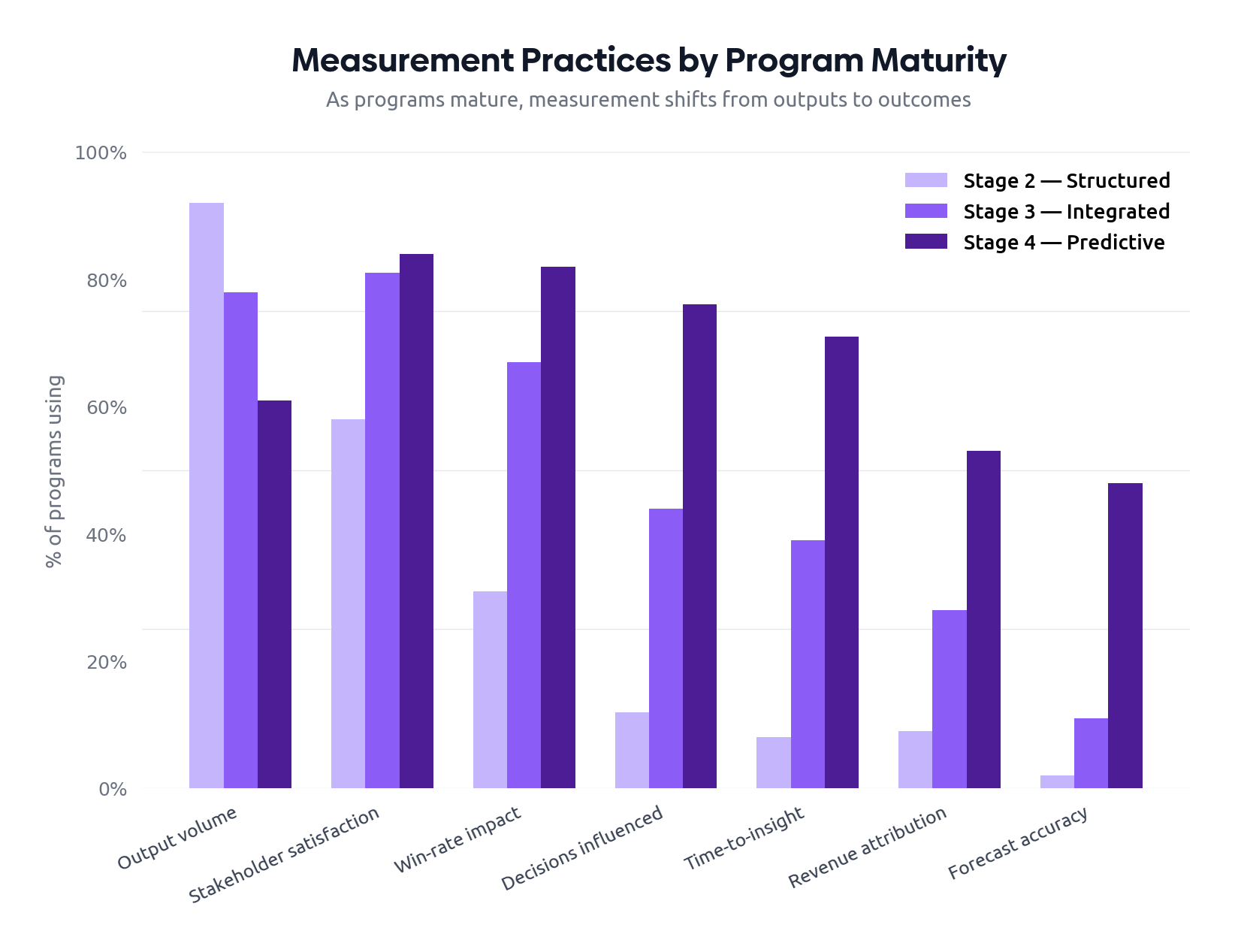

Measuring impact and demonstrating value

Competitive intelligence has long struggled with the measurement problem. Unlike marketing, sales, or product analytics, CI has no native conversion event. The value of preventing a bad decision is real but unattributable. The value of accelerating a good decision is real but diffuse.

The shift from outputs to outcomes

The most consequential change in 2026 measurement practice is the move away from output metrics (number of briefs produced, number of battlecards maintained, number of alerts sent) toward outcome metrics tied to specific business decisions. The output metrics have not disappeared — they remain useful operationally — but they are increasingly relegated to internal management dashboards rather than the executive narrative.

| Measurement approach | Stage 2 | Stage 3 | Stage 4 |
|---|---|---|---|
| Volume of outputs delivered | 92% | 78% | 61% |
| Stakeholder satisfaction surveys | 58% | 81% | 84% |
| Win-rate impact on competitive deals | 31% | 67% | 82% |
| Decisions influenced (tracked register) | 12% | 44% | 76% |
| Time-to-insight metrics | 8% | 39% | 71% |
| Confidence-weighted forecast accuracy | 2% | 11% | 48% |
| Estimated revenue impact attribution | 9% | 28% | 53% |
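The report does not specify how programs compute confidence-weighted forecast accuracy. One widely used way to score probabilistic forecasts is the Brier score: the mean squared difference between the probability an analyst assigned to a competitor move and whether that move happened (1) or not (0). The sketch below assumes a hypothetical `Forecast` record and illustrative questions; it shows one possible metric, not the method the surveyed programs use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Forecast:
    """One probabilistic call about a competitor move (hypothetical schema)."""
    question: str       # e.g. "Will Competitor X cut list price by Q3?"
    probability: float  # stated probability that the move happens, 0.0 to 1.0
    occurred: bool      # resolved outcome, once known


def brier_score(forecasts: list[Forecast]) -> float:
    """Mean squared error between stated probabilities and 0/1 outcomes.

    0.0 is perfect; always answering 0.5 scores 0.25; lower is better.
    """
    if not forecasts:
        raise ValueError("need at least one resolved forecast")
    total = 0.0
    for f in forecasts:
        if not 0.0 <= f.probability <= 1.0:
            raise ValueError(f"probability out of range: {f.question}")
        outcome = 1.0 if f.occurred else 0.0
        total += (f.probability - outcome) ** 2
    return total / len(forecasts)


if __name__ == "__main__":
    # Illustrative forecasts only; not data from the study.
    resolved = [
        Forecast("Competitor X cuts list price by Q3", 0.8, True),
        Forecast("Competitor Y opens an EU data center", 0.3, False),
        Forecast("Competitor Z acquires a startup this year", 0.6, False),
    ]
    print(f"Brier score: {brier_score(resolved):.3f}")  # Brier score: 0.163
```

Tracking the score per analyst or per quarter gives a simple way to show whether stated confidence levels are well calibrated over time.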

The decisions-influenced register

The single practice most associated with strong executive engagement in our research was the maintenance of a “decisions-influenced register” — a structured log, often as simple as a spreadsheet, in which the CI team documents specific decisions where their work materially shaped the outcome. Entries typically include the decision, the analytical contribution, the executive sponsor, and the eventual outcome where known. Reviewed quarterly with leadership, this register transforms the conversation about CI value from anecdote into evidence.

One organization we interviewed maintains a register with 47 entries over the past 18 months, ranging from a $40M pricing decision in which CI provided the competitor benchmark, to the abandonment of an acquisition target after CI identified post-merger integration risks. The CFO reviews it quarterly. Budget conversations, predictably, have become shorter.
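A register like this usually lives in a spreadsheet, but a minimal Python sketch makes its structure explicit. It assumes a hypothetical `RegisterEntry` record holding the four fields described above (decision, analytical contribution, executive sponsor, eventual outcome) plus a decision date, which is our own addition so entries can be grouped for the quarterly review. Helper names and sample rows are illustrative only.

```python
from __future__ import annotations

import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class RegisterEntry:
    """One row of a decisions-influenced register (hypothetical schema).

    decision, contribution, sponsor, and outcome mirror the fields the report
    describes; decided_on is an assumed extra field used for quarterly grouping.
    """
    decided_on: date
    decision: str
    contribution: str           # what the CI team's analysis added
    sponsor: str                # executive who owned the decision
    outcome: str | None = None  # filled in later, once the result is known


def quarter_of(d: date) -> tuple[int, int]:
    """Return (year, quarter), with January to March as quarter 1."""
    return d.year, (d.month - 1) // 3 + 1


def entries_for_quarter(
    register: list[RegisterEntry], year: int, quarter: int
) -> list[RegisterEntry]:
    """Entries whose decision date falls in the given calendar quarter."""
    return [e for e in register if quarter_of(e.decided_on) == (year, quarter)]


def open_outcomes(register: list[RegisterEntry]) -> list[RegisterEntry]:
    """Entries still awaiting a known outcome, to follow up at the review."""
    return [e for e in register if e.outcome is None]


def to_csv(register: list[RegisterEntry]) -> str:
    """Serialize the register to CSV text so it can be opened in a spreadsheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(RegisterEntry)])
    writer.writeheader()
    for e in register:
        row = asdict(e)
        row["decided_on"] = e.decided_on.isoformat()
        row["outcome"] = e.outcome or ""
        writer.writerow(row)
    return buf.getvalue()


if __name__ == "__main__":
    # Illustrative rows only; not data from the study.
    register = [
        RegisterEntry(date(2026, 2, 10), "Hold mid-tier list price",
                      "Competitor price benchmark", "CFO", "Price held"),
        RegisterEntry(date(2026, 5, 3), "Drop acquisition target",
                      "Post-merger integration risk review", "CEO"),
    ]
    print([e.decision for e in entries_for_quarter(register, 2026, 2)])
    print(to_csv(register))
```

For the quarterly review, `entries_for_quarter` pulls the period's decisions and `open_outcomes` lists entries whose results still need follow-up; keeping the outcome optional lets a team log a decision before its impact is known.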

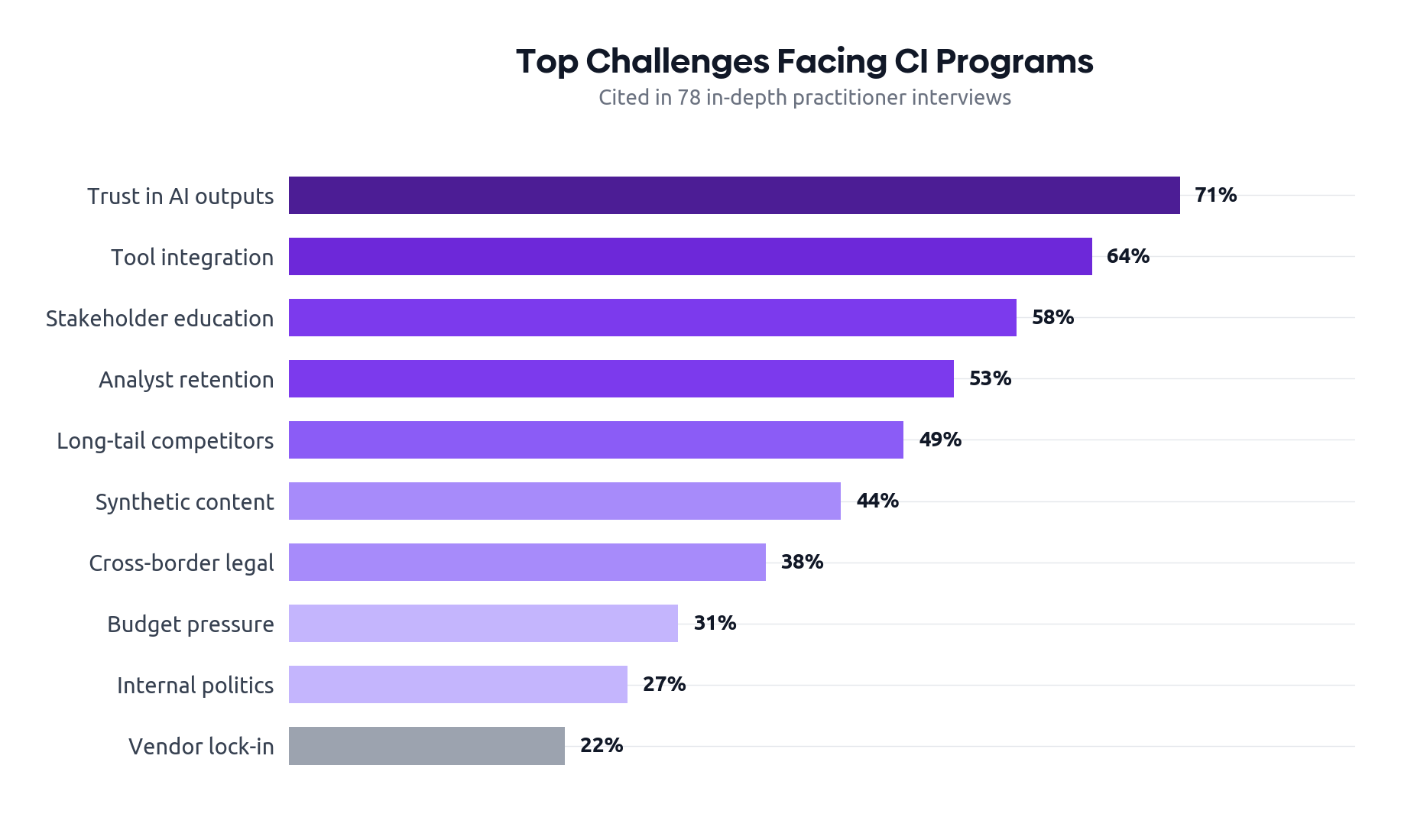

The top challenges programs are facing

Across our 78 in-depth interviews, a consistent set of challenges surfaced. What is striking is how few of the top challenges concern data access or tool capability, the historical CI pain points. The 2026 challenges are increasingly about governance, integration, and the relationship between CI and the rest of the business.

- Trust in AI-generated outputs (71% of interviews). How to maintain quality when the volume of generated content exceeds human review capacity.

- Integration across tools and systems (64%). Reconciling competitor entities, surfacing intelligence in the systems where decisions are made, and avoiding duplicated effort across teams.

- Stakeholder education (58%). Helping executives ask better questions and consume intelligence in ways that produce decisions rather than discussion.

- Retention of skilled analysts (53%). Particularly those with strong AI workflow skills, who are aggressively recruited by adjacent functions.

- Coverage of long-tail competitors (49%). The proliferation of small but credible new entrants, especially in software, that cannot be ignored but cannot be tracked individually.

- Distinguishing signal from synthetic content (44%). Astroturfed reviews, AI-generated competitor blog posts, and other forms of narrative warfare make naive monitoring increasingly unreliable.

- Cross-border legal and regulatory friction (38%). Particularly for global programs operating across the U.S., EU, China, and India.

- Budget pressure (31%). Mentioned far less often than in pre-2024 surveys, but still present, particularly in mid-market organizations.

- Internal political resistance from product or sales (27%). Where central CI is perceived to be encroaching on territory previously owned by other functions.

- Vendor lock-in concerns (22%). Particularly around platforms whose AI capabilities make extraction of historical analysis difficult.

The pattern is instructive. Where the 2020 challenge list was dominated by data access and tooling, the 2026 list is dominated by organizational and governance issues. This is, on balance, a sign of progress — these are the challenges of a discipline that has the resources it needs and is now figuring out how to use them well.

The road ahead: 2027–2030

Predictions in a fast-moving discipline are humbling exercises. We offer the following not as forecasts but as the directional bets that most consistently emerged from our practitioner and vendor interviews.

Six developments we expect

- The end of the standalone CI report. By 2028, we expect the static intelligence brief to be largely displaced by interactive, queryable intelligence environments. Executives will ask questions of an intelligence system rather than read documents prepared in anticipation of their questions.

- Embedded forecasting becomes routine. Probabilistic assessment of competitor moves, not just description of them, will move from the leading edge into standard practice. Programs that cannot quantify uncertainty will appear quaintly underequipped.

- An audited intelligence standard emerges. Just as financial reporting evolved auditing standards, we expect at least one major industry to develop voluntary standards for AI-assisted intelligence, including provenance, citation, and confidence-scoring requirements. Pharmaceuticals and financial services are the likely first movers.

- CI reshapes M&A workflows. The ability of AI-assisted CI to dramatically compress diligence timelines will change how deals are evaluated, particularly in serial-acquirer organizations. CI teams will be invited into deal rooms earlier and more often.

- The talent pool continues to diversify. Expect more analysts from investigative journalism, applied AI research, intelligence-community, and design-thinking backgrounds. The pure-consulting pipeline will remain important but will no longer dominate.

- Defensive CI becomes its own sub-discipline. As AI-assisted competitive analysis becomes universal, organizations will invest in understanding what others can infer about them. Expect the rise of “CI hygiene” practices: deliberate management of public information emissions, executive-team digital footprints, and the signals leaked through job postings and patent filings.

What we are less confident about

Three open questions remain genuinely contested in the practitioner community. First, whether the consolidation among CI platforms will continue or whether AI-native upstarts will keep the market fragmented. Second, whether the move toward centralized programs will reverse if AI capabilities push intelligence work back into the line of business. Third, whether the legal and regulatory environment for competitive data will tighten or loosen as governments grapple with the dual-use nature of these tools. We deliberately do not take strong positions on these questions; we expect to revisit them in next year’s edition.

Strategic recommendations

The recommendations below are organized for three audiences: CI leaders, the executives who sponsor them, and the vendors who serve them. Each is intended to be specific enough to act on within a quarter.

For CI leaders

- Stand up a decisions-influenced register this quarter. A simple log of decisions your work has shaped, reviewed quarterly with your executive sponsor, is the single highest-ROI change you can make to how your program is perceived.

- Adopt a documented AI governance policy. Cover citation standards, hallucination handling, human review gates, and accuracy auditing. The policy can be one page; what matters is that it exists and is followed.

- Audit your source map. List every source feeding your program, the tool that provides it, the competitors covered, and the gaps. Most programs discover meaningful blind spots in this exercise.

- Reinvest in primary research. As AI commoditizes public-source synthesis, the strategic edge moves toward proprietary information. Devote a meaningful share of analyst time to win-loss, former-employee, and customer interviews.

- Build the integration backbone. Unify competitor entities across your monitoring, battlecard, CRM, and win-loss systems. The work is unglamorous; the leverage is large.
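The source-map audit and entity unification recommended above can be combined in one small script. This is a sketch under stated assumptions: the alias table, competitor names, tool names, and the `Source` record are all hypothetical, and exact-match alias lookup stands in for the fuzzier entity-resolution methods a production system would likely need. For each tracked competitor it reports which sources in the map do not cover it, plus any raw names no alias resolved.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass

# Hypothetical alias table: every spelling seen across monitoring, battlecard,
# CRM, and win-loss systems maps to one canonical competitor ID.
ALIASES: dict[str, str] = {
    "acme": "acme",
    "acme corp": "acme",
    "acme corporation": "acme",
    "globex": "globex",
    "globex inc": "globex",
    "initech": "initech",
}


def canonical(raw_name: str) -> str | None:
    """Lowercase, turn commas and periods into spaces, collapse whitespace, look up."""
    key = " ".join(raw_name.lower().replace(",", " ").replace(".", " ").split())
    return ALIASES.get(key)


@dataclass(frozen=True)
class Source:
    name: str                     # e.g. "job postings", "win-loss interviews"
    tool: str                     # system or vendor that delivers the source
    competitors: tuple[str, ...]  # competitor names as that tool spells them


def audit_source_map(
    sources: list[Source], tracked: set[str]
) -> tuple[dict[str, list[str]], list[str]]:
    """Return (gaps, unresolved).

    gaps maps each tracked competitor ID to the sources in the map that do not
    cover it; unresolved lists raw names the alias table could not match.
    """
    all_sources = {s.name for s in sources}
    coverage: dict[str, set[str]] = defaultdict(set)
    unresolved: set[str] = set()
    for src in sources:
        for raw in src.competitors:
            cid = canonical(raw)
            if cid is None:
                unresolved.add(raw)  # a human should extend ALIASES for these
            else:
                coverage[cid].add(src.name)
    gaps = {
        cid: sorted(all_sources - coverage[cid])
        for cid in sorted(tracked)
        if all_sources - coverage[cid]
    }
    return gaps, sorted(unresolved)


if __name__ == "__main__":
    sources = [
        Source("job postings", "tool-a", ("Acme Corp.", "Globex, Inc.")),
        Source("win-loss interviews", "crm", ("ACME Corporation", "Initech")),
        Source("patent filings", "tool-b", ("Acme", "Hooli")),
    ]
    gaps, unresolved = audit_source_map(sources, {"acme", "globex", "initech"})
    print(gaps)
    # {'globex': ['patent filings', 'win-loss interviews'], 'initech': ['job postings', 'patent filings']}
    print(unresolved)  # ['Hooli']
```

Resolving names before counting coverage matters: without the alias step, "Acme Corp." and "ACME Corporation" would appear as two partially covered competitors instead of one fully covered one.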

For executives sponsoring CI

- Anchor the program to a recurring decision. The most influential CI programs are indispensable to one specific cadence — pricing reviews, product council, M&A pipeline, annual planning. If your program is not yet indispensable to such a process, sponsor that integration deliberately.

- Demand provenance. Every claim in every intelligence brief should be sourced. If your program cannot show its work, you do not yet know how reliable its work is.

- Promote talent visibility. CI analysts who present directly to executive forums grow faster and stay longer. Make these forums genuinely accessible rather than mediated through layers of management.

- Fund the integration work. The unspectacular plumbing — data engineering, entity resolution, system integrations — generates more durable value than another headline tool purchase.
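The provenance demand above, together with the human review gates a one-page AI governance policy might specify, can be expressed as a pre-publication check on a brief. This minimal sketch assumes a hypothetical `Claim` record and two illustrative rules (every claim cites at least one source; every AI-generated claim names a human reviewer); real policies would define their own rules.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Claim:
    """One assertion in an intelligence brief (hypothetical schema)."""
    text: str
    sources: list[str] = field(default_factory=list)  # URLs or document IDs cited
    ai_generated: bool = False
    reviewed_by: str | None = None  # analyst who checked an AI-drafted claim


def review_gate(claims: list[Claim]) -> list[str]:
    """Return the reasons a brief cannot ship yet; an empty list means it passes.

    Illustrative rules: every claim cites at least one source, and every
    AI-generated claim names a human reviewer.
    """
    problems: list[str] = []
    for i, claim in enumerate(claims, start=1):
        if not claim.sources:
            problems.append(f"claim {i}: no source cited")
        if claim.ai_generated and not claim.reviewed_by:
            problems.append(f"claim {i}: AI-generated but not human-reviewed")
    return problems


if __name__ == "__main__":
    brief = [
        Claim("Competitor X raised list prices", ["https://example.com/pricing"],
              ai_generated=True, reviewed_by="analyst-1"),
        Claim("Competitor Y is expanding its sales team", ai_generated=True),
    ]
    for problem in review_gate(brief):
        print(problem)
    # claim 2: no source cited
    # claim 2: AI-generated but not human-reviewed
```

Returning a list of reasons rather than a single pass/fail flag gives reviewers something concrete to fix, and the same list can be logged as evidence that the gate was applied.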

For vendors serving CI

- Solve the provenance problem credibly. The next generation of platform differentiation will be earned by tools that make AI outputs auditable, citable, and reviewable, not by tools that make them merely faster.

- Compete on integration, not features. Customers are increasingly buying for fit into a stack rather than for standalone capability. APIs, entity-resolution support, and partner ecosystems will outweigh feature checklists.

- Invest in customer success for the analyst role. The analyst, not the buyer, determines whether your tool gets used. Tooling-adjacent training and workflow design support are increasingly bundled by leading vendors.

The organizations winning at competitive intelligence in 2026 are not the ones with the most data, the largest teams, or the fanciest tools. They are the ones whose CI function has become a trusted, indispensable input to specific recurring decisions. Everything else — the AI workflows, the alternative data, the platforms — is in service of that trust. Build the trust, and the rest follows.

This report is published by Segment8, the competitive intelligence platform that connects market signals to deal outcomes, from raw signal to finished battlecard, grounded in real win/loss data.

About this report

The State of Competitive Intelligence 2026 is an annual research report intended for senior leaders of CI programs, executives who sponsor them, and the broader ecosystem of advisors and vendors serving the discipline. It is the result of approximately ten months of research conducted between July 2025 and March 2026.

Authorship and acknowledgements

This report was produced under the Chatham House Rule with respect to the 78 interviews that informed it. Survey respondents participated voluntarily and were not compensated. We are grateful to every practitioner who contributed time, insight, and honest reflection on what is working and what is not in their programs. Any errors of fact or judgment are ours alone.

A note on figures

Quantitative figures presented as percentages or counts derive from the 612-respondent practitioner survey unless otherwise attributed. Market-sizing figures are estimates synthesizing public vendor disclosures, industry analyst reports, and our own modeling; they are presented as ranges where appropriate and should be treated as directional rather than authoritative.

End of Report

This report may be cited as: “The State of Competitive Intelligence 2026,” Segment8 Annual Industry Report, May 2026.